2026-03-03

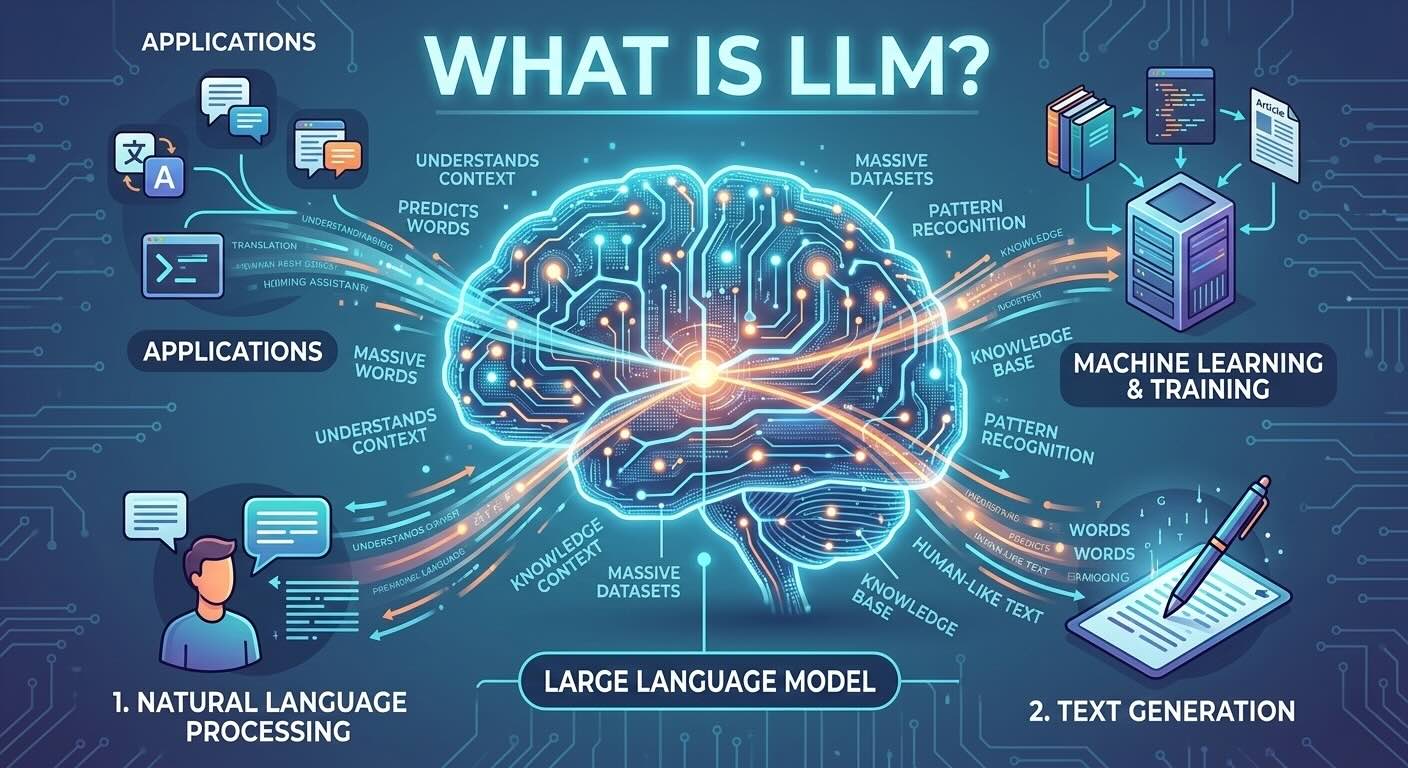

What Is an LLM? How Language Models Actually Work

Introduction

When people talk about ChatGPT, Claude, Gemini, or any AI that writes text — they're talking about large language models, or LLMs.

LLMs are the most influential AI technology of the past few years. They can write essays, answer questions, summarize documents, write code, and carry on conversations.

But what are they, exactly? How do they work?

This article explains it clearly — no technical background required.

What Does "Language Model" Mean?

A language model is an AI system trained to understand and generate text.

More specifically, it's trained to predict what text is likely to come next given what came before.

That single idea — predict the next word — is the foundation of everything.

The Core Idea: Predicting the Next Word

Imagine you're given the beginning of a sentence:

The cat sat on the...

Your brain immediately generates likely continuations:

- mat

- floor

- couch

- roof

You can do this because you've read millions of sentences in your life and built an intuition for how language works.

Language models do essentially the same thing — but learned by processing massive amounts of text rather than living human experiences.

A language model assigns probabilities to possible next words:

- "mat" → 45% likely

- "floor" → 20% likely

- "couch" → 15% likely

- everything else → remaining 20%

By repeatedly predicting the next word (or more precisely, the next "token"), the model generates coherent text — sentence by sentence, paragraph by paragraph.

What Does "Large" Mean?

The "large" in large language models refers to scale — specifically:

-

Parameters: The adjustable numbers inside the model. GPT-4, for example, is estimated to have hundreds of billions of parameters. These are the "weights" the model learned during training.

-

Training data: LLMs are trained on enormous amounts of text — a significant fraction of the publicly available internet, books, code repositories, and more.

-

Compute: Training these models requires thousands of specialized AI chips running for weeks or months.

The "large" matters because scale dramatically improves capability. Bigger models trained on more data tend to produce much more coherent, knowledgeable, and nuanced outputs.

How LLMs Are Built: The Training Process

Training an LLM happens in several stages.

Stage 1: Pre-training (learning from text)

The model is shown enormous quantities of text — web pages, books, code, articles, forum posts.

For each piece of text, it's repeatedly asked to predict the next word. When it gets it wrong, the weights are adjusted. When it gets it right, the weights are reinforced.

After trillions of these corrections across billions of documents, the model learns:

- grammar and syntax

- facts and world knowledge

- how ideas relate to each other

- writing styles and conventions

- reasoning patterns

This stage produces a "base model" — highly capable but not yet useful for conversation.

Stage 2: Fine-tuning (learning to follow instructions)

The base model is then trained specifically to be helpful, harmless, and honest.

Human trainers provide examples of good conversations: a user asks a question, a helpful assistant answers it well.

The model is trained to produce responses that look like those examples.

Stage 3: Reinforcement learning from human feedback (RLHF)

Humans rate model responses — which answers are better? Which are more accurate, more helpful, safer?

These ratings are used to further tune the model toward consistently good responses.

This is why modern LLMs feel much more like helpful assistants and much less like raw text predictors.

What LLMs Know (and Don't Know)

LLMs learn from whatever text they were trained on.

This means:

They know: Facts, concepts, language patterns, reasoning strategies, code syntax, historical events, scientific knowledge — anything that appears in their training data.

They don't know: Information from after their training cutoff date, private information not in their training data, real-time events.

They aren't certain: LLMs don't have a database of verified facts. They predict likely text. This is why they sometimes confidently produce incorrect information — a phenomenon called hallucination.

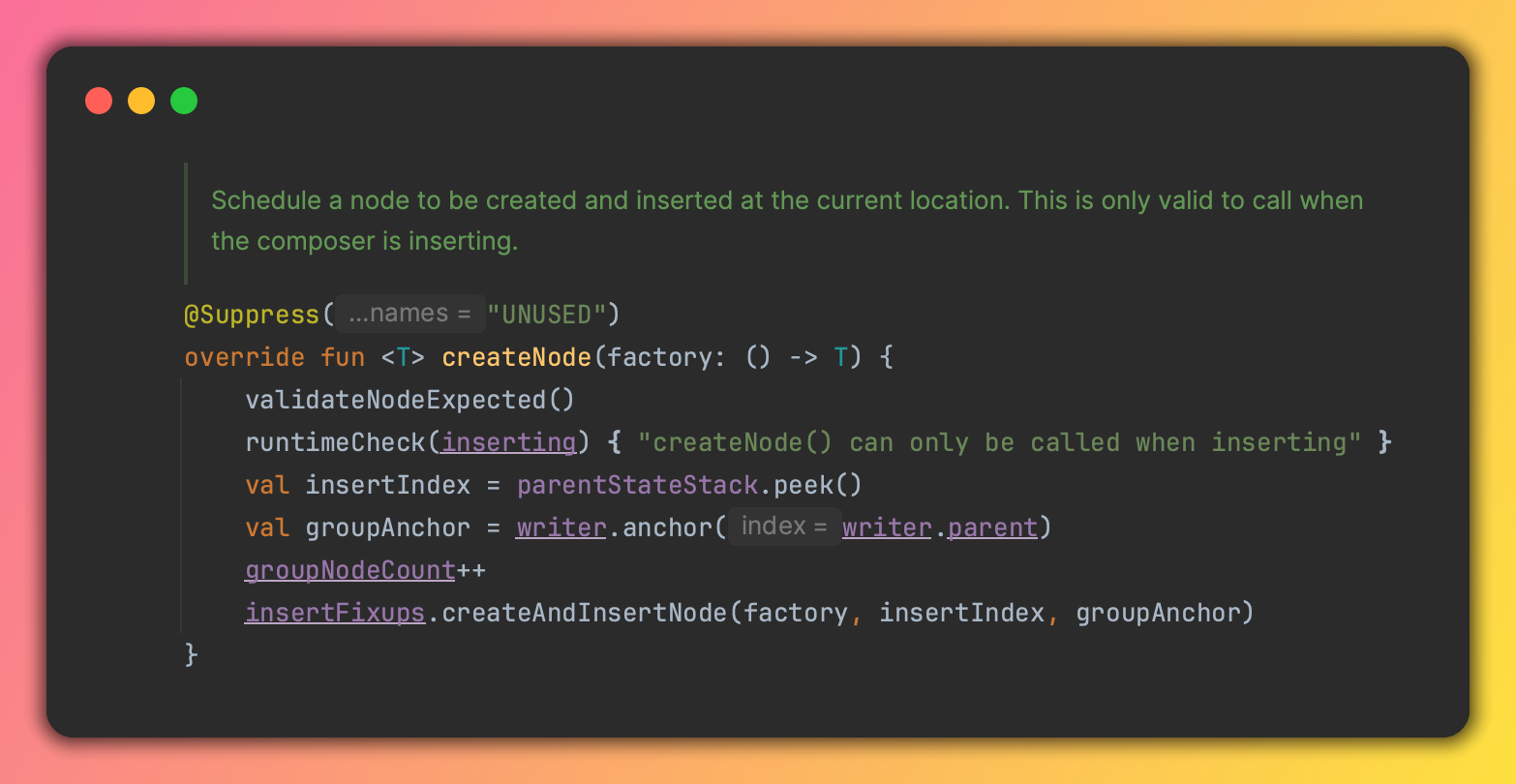

The Transformer Architecture

Modern LLMs are built on a neural network architecture called the Transformer, introduced in a 2017 research paper titled "Attention Is All You Need."

The key innovation of Transformers is a mechanism called attention.

Instead of processing text word by word in a simple sequence (like older models did), Transformers can look at all words at once and learn which words are most relevant to each other.

For example, in the sentence:

The trophy didn't fit in the suitcase because it was too big.

What does "it" refer to — the trophy or the suitcase? Humans resolve this easily from context. Transformers use attention to do the same: they learn to attend to "trophy" when processing "it" based on patterns in the training data.

This ability to capture long-range relationships in text is why Transformers dramatically outperformed earlier language models.

LLMs Are Not Thinking

It's tempting to describe LLMs as "understanding" language. But that's not quite accurate.

LLMs are extremely sophisticated pattern matchers trained on human-generated text.

They don't:

- have beliefs or opinions in a meaningful sense

- understand the world the way humans do

- know what's true vs. false with certainty

- reason through problems from first principles

They produce text that looks like understanding, because they've learned from billions of examples of humans demonstrating understanding.

The results are often remarkable — but knowing this limitation helps you use these tools more effectively.

What LLMs Are Good At

LLMs excel at tasks involving language:

- Writing and editing — drafts, emails, summaries, translations

- Question answering — explaining concepts, providing context

- Code — generating, explaining, and debugging software

- Brainstorming — generating ideas, perspectives, options

- Classification — sorting text, extracting information, labeling

They're less reliable for:

- Tasks requiring precise factual accuracy (always verify)

- Complex multi-step reasoning without guidance

- Anything requiring real-time or private information

Examples of LLMs Today

| Model | Creator | Notes | |---|---|---| | GPT-4 / GPT-4o | OpenAI | Powers ChatGPT | | Claude | Anthropic | Known for safety and nuance | | Gemini | Google DeepMind | Integrated across Google products | | Llama | Meta | Open-source, runs locally | | Mistral | Mistral AI | Efficient open-source models |

All of these follow the same fundamental principles described in this article.

Final Thoughts

Large language models work by predicting the next word — trained on vast amounts of text until that simple task becomes something that looks remarkably like intelligence.

They're not magic. They're not minds. But they're genuinely transformative tools for anyone who works with language, ideas, or information.

Keep learning

- What Is a Neural Network? (No Math Required) — the underlying technology that makes LLMs possible

- How AI Models Are Trained: Data, Compute, and Feedback — a closer look at the training process

- AI Hallucinations: Why AI Makes Things Up — the key limitation of LLMs and how to deal with it

- What Is Generative AI? A Simple Explanation — the broader category LLMs belong to

- Getting Started with ChatGPT — put what you've learned into practice

Continue reading

2026-03-10

The AI Mexican Standoff in Tech

2026-03-10

I Vibe Coded an IntelliJ Plugin in 30 Minutes With Zero Plugin Dev Experience

2026-03-09

Should You Still Learn to Code in 2026?

2026-03-08

AI in 2026 So Far: Key Trends Everyone Should Know

2026-03-07

AI Hallucinations: Why AI Makes Things Up (And What to Do About It)

2026-03-05