2026-02-19

How AI Models Are Trained: Data, Compute, and Feedback

Introduction

You've probably heard that AI models are "trained." But what does that actually mean?

How does a system go from raw text scraped off the internet to something capable of writing essays, answering medical questions, and debugging code?

Training is the process that makes AI possible — and understanding it gives you a much better intuition for what these systems can and can't do.

The Big Picture: What Training Is

Training an AI model is, at its core, a learning process driven by feedback.

The model starts knowing nothing useful. It makes predictions. It gets feedback on how wrong those predictions were. It adjusts. It tries again.

Repeat this across trillions of examples, and the model gradually gets very good at its task.

It's not so different from how humans learn skills — except the scale is almost incomprehensible.
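The predict, get feedback, adjust cycle described above can be sketched in a few lines. This is a toy illustration only: the three data points, the single-weight "model", and the learning rate are made up, and a real model has billions of weights rather than one.

```python
# Toy illustration of the predict -> measure error -> adjust loop.
# The data, the single weight, and the learning rate are all invented
# for illustration; the hidden rule the model must discover is y = 3x.
examples = [(1.0, 3.0), (2.0, 6.0), (3.0, 9.0)]  # (input, correct answer)

weight = 0.0           # the model starts out knowing nothing useful
learning_rate = 0.05   # how big each adjustment is

for step in range(200):
    for x, target in examples:
        prediction = weight * x              # 1. make a prediction
        error = prediction - target          # 2. feedback: how wrong was it?
        weight -= learning_rate * error * x  # 3. adjust slightly to shrink the error

print(round(weight, 3))  # prints 3.0
```

Nobody told the model that the answer is "multiply by 3". It got there purely from repeated feedback on its own mistakes.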

The Three Pillars of AI Training

Modern AI training requires three things working together:

1. Data

Data is the raw material of AI learning.

Without data, a model cannot learn anything meaningful. The type, quantity, and quality of training data fundamentally determine what a model knows and how well it performs.

For large language models, training data typically includes:

- Web pages (a significant fraction of the crawlable internet)

- Books and literature

- Code from open-source repositories

- Wikipedia and encyclopedias

- News articles and academic papers

The datasets used to train GPT-scale models contain hundreds of billions to trillions of words.

Data quality matters enormously. Garbage in, garbage out. If training data contains errors, biases, or misinformation, the model will reflect that.
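As a rough sketch of what "cleaning" training data can mean, the snippet below drops exact duplicates and very short documents. The function name, the five-word threshold, and the sample documents are hypothetical; real pipelines use far more elaborate filters.

```python
def clean_corpus(documents, min_words=5):
    """Keep documents that are long enough and not exact duplicates."""
    seen = set()
    kept = []
    for doc in documents:
        # Normalize case and whitespace so trivial variants count as duplicates.
        normalized = " ".join(doc.lower().split())
        if len(normalized.split()) < min_words:
            continue  # too short to be useful
        if normalized in seen:
            continue  # already have this text
        seen.add(normalized)
        kept.append(doc)
    return kept


raw = [
    "The Eiffel Tower is located in Paris.",
    "the eiffel tower is   located in paris.",  # duplicate after normalizing
    "Click here",                               # too short
    "Gradient descent adjusts parameters to reduce error.",
]
print(clean_corpus(raw))
```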

2. Compute

Training AI models requires massive computational power.

At its heart, training is performing billions of mathematical operations — multiplications, additions — across enormous matrices of numbers.

This math happens on GPUs (graphics processing units, such as NVIDIA's H100) and on specialized AI accelerators (such as Google's TPUs).

Training a frontier language model today involves:

- Thousands of high-end AI chips

- Weeks or months of continuous computation

- Enormous electricity and cooling requirements

The cost to train a single major model can run from tens of millions to hundreds of millions of dollars. This is why only a small number of organizations can build frontier models.
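To get a feel for these numbers, here is a back-of-the-envelope sketch. It relies on a commonly cited rule of thumb that training takes roughly 6 floating-point operations per parameter per training token. The model size, token count, chip throughput, and cluster size are hypothetical round numbers, not figures for any real model.

```python
# Back-of-the-envelope training compute estimate.
# Every input below is a hypothetical round number chosen for illustration.
parameters = 400e9             # assumed model size: 400 billion parameters
training_tokens = 15e12        # assumed dataset size: 15 trillion tokens
flops_per_chip_per_sec = 4e14  # assumed sustained throughput of one chip
num_chips = 16_000             # assumed cluster size

# Rule of thumb: about 6 floating-point operations per parameter per token.
total_flops = 6 * parameters * training_tokens

chip_hours = total_flops / flops_per_chip_per_sec / 3600
days = chip_hours / num_chips / 24

print(f"total compute: {total_flops:.1e} FLOPs")
print(f"chip-hours:    {chip_hours:,.0f}")
print(f"wall-clock:    {days:.0f} days on {num_chips:,} chips")
```

Under these assumptions the run takes on the order of tens of millions of chip-hours and about two months of wall-clock time, which is why the hardware bill is so large.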

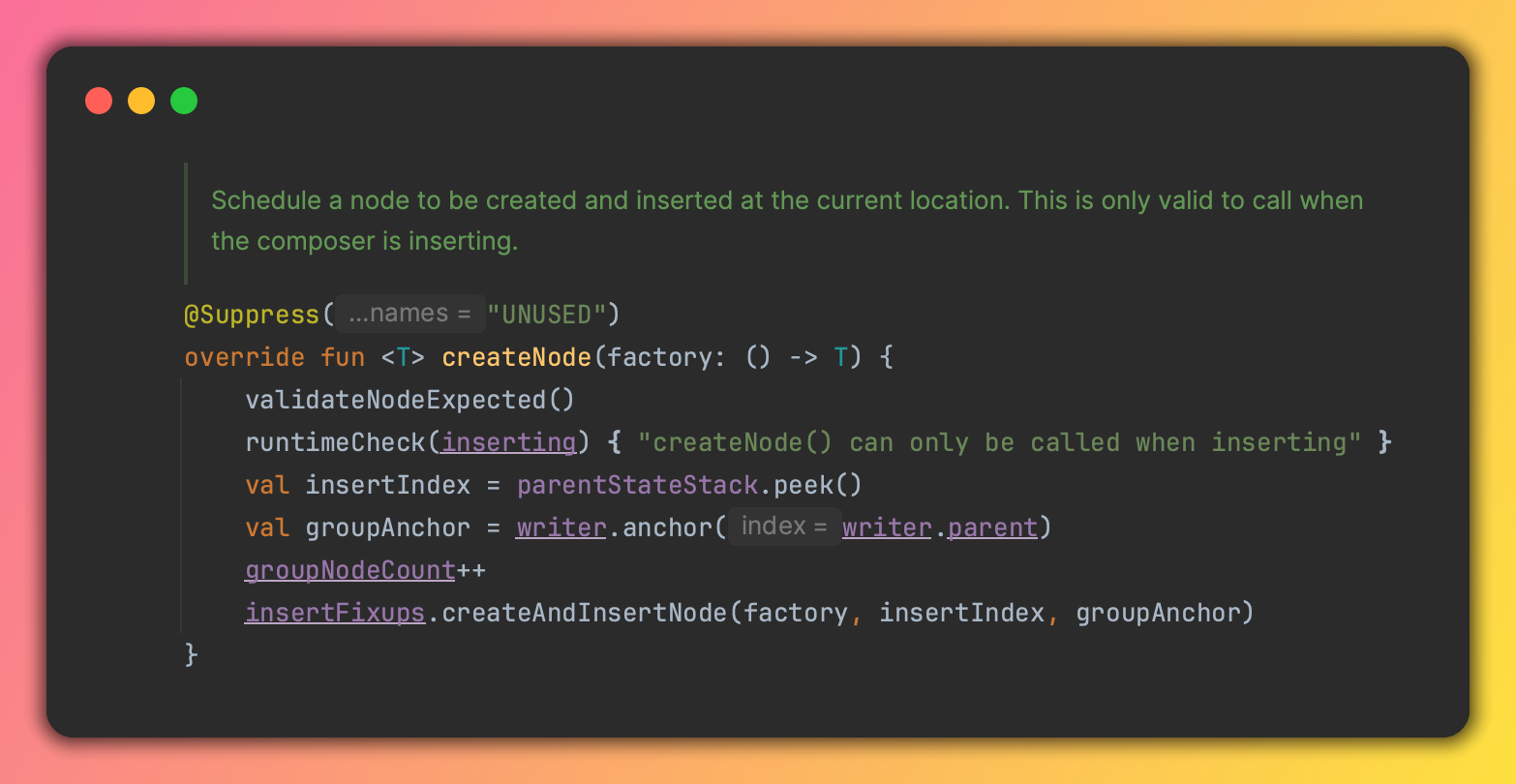

3. Algorithms

The third ingredient is the training algorithm — the procedure that actually adjusts the model based on feedback.

The most important are:

Gradient descent — the core optimization algorithm. After each prediction error, gradient descent calculates which direction to adjust every parameter to reduce future errors.

Backpropagation — the technique that efficiently calculates how much each parameter contributed to the error, making gradient descent practical for large networks.

Loss functions — mathematical measures of how wrong the model's predictions are. The training process minimizes this loss over time.
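These three pieces fit together in a few lines. Below is a minimal sketch on a made-up two-weight model, where the prediction is `w2 * (w1 * x)`. It uses a squared-error loss, a hand-written chain rule standing in for backpropagation, and plain gradient descent. The numbers and function names are illustrative assumptions; real frameworks compute these gradients automatically.

```python
def forward(w1, w2, x):
    hidden = w1 * x            # first "layer"
    prediction = w2 * hidden   # second "layer"
    return hidden, prediction


def loss_fn(prediction, target):
    # Loss function: squared error between prediction and target.
    return (prediction - target) ** 2


def backward(w1, w2, x, target):
    # Backpropagation: apply the chain rule from the loss back to each weight.
    hidden, prediction = forward(w1, w2, x)
    d_prediction = 2 * (prediction - target)  # dLoss/dPrediction
    grad_w2 = d_prediction * hidden           # dLoss/dw2
    d_hidden = d_prediction * w2              # dLoss/dHidden
    grad_w1 = d_hidden * x                    # dLoss/dw1
    return grad_w1, grad_w2


w1, w2 = 0.5, 0.5        # arbitrary starting weights
x, target = 2.0, 8.0     # one made-up training example
learning_rate = 0.01

for step in range(500):
    grad_w1, grad_w2 = backward(w1, w2, x, target)
    # Gradient descent: step each weight against its gradient.
    w1 -= learning_rate * grad_w1
    w2 -= learning_rate * grad_w2

final_loss = loss_fn(forward(w1, w2, x)[1], target)
print(f"final loss: {final_loss:.6f}")
```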

Stage 1: Pre-Training

For large language models, training happens in stages. The first and most expensive is pre-training.

During pre-training, the model is shown enormous amounts of text and asked to do one thing:

Predict the next word (or token).

Given the text "The Eiffel Tower is located in", predict what comes next.

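The model doesn't output a single guess. It assigns a probability to every possible next token. If it put high probability on "Paris", the error is small and the weights barely change. If it put most of its probability on "Tokyo", the error is large, and the weights are adjusted to make "Paris" more likely next time.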

This happens trillions of times across the entire training dataset.
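Here is a small sketch of how "how wrong was the prediction" can be turned into a number for next-token prediction. It uses a softmax over raw scores and a cross-entropy loss, which is the standard choice for this task. The three-word vocabulary and the scores are invented for illustration.

```python
import math


def softmax(scores):
    # Turn raw scores into probabilities that sum to 1.
    top = max(scores)
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


vocab = ["Paris", "Tokyo", "London"]  # toy vocabulary
target = vocab.index("Paris")         # the word that actually came next


def next_token_loss(scores):
    # Cross-entropy: negative log of the probability given to the right word.
    probs = softmax(scores)
    return -math.log(probs[target])


loss_when_right = next_token_loss([4.0, 0.0, 0.0])  # model favors "Paris"
loss_when_wrong = next_token_loss([0.0, 4.0, 0.0])  # model favors "Tokyo"

print(f"favoring Paris: loss = {loss_when_right:.3f}")
print(f"favoring Tokyo: loss = {loss_when_wrong:.3f}")
```

The loss is small when the model leans toward the right word and large when it leans toward a wrong one, and that number is what training pushes down.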

What does the model learn from this seemingly simple task?

Quite a lot. To predict text accurately, the model must learn:

- Grammar and syntax

- Facts about the world (historical events, scientific concepts, geography)

- How arguments are structured

- How code works

- Cause and effect relationships

- The writing styles of different genres

The model learns all of this implicitly, simply by getting good at predicting text.

After pre-training, you have a base model — powerful but not yet tuned to be a helpful assistant.

Stage 2: Fine-Tuning

A pre-trained base model will generate text, but it's not particularly useful. Ask it a question and it might just... generate more questions, because that's what follows questions in training data.

Fine-tuning shapes the model into a more controlled, useful system.

Instruction fine-tuning trains the model on examples of good behavior:

- User asks a question → assistant provides a helpful answer

- User asks for a summary → assistant produces a clean summary

- User asks to debug code → assistant explains the issue and fixes it

The model learns to follow instructions and respond helpfully.
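One way to picture an instruction fine-tuning example is as a prompt and a response joined into one sequence, with the training loss counted only on the response part. Masking the prompt like this is a common practice rather than a universal rule. The `<user>`, `<assistant>`, and `<end>` markers and the whitespace "tokenizer" below are hypothetical simplifications.

```python
def build_example(instruction, response):
    """Join a prompt and a response, marking which tokens are trained on."""
    # Hypothetical role markers; real chat formats differ between models.
    prompt_tokens = ["<user>"] + instruction.split() + ["<assistant>"]
    response_tokens = response.split() + ["<end>"]

    tokens = prompt_tokens + response_tokens
    # 0 = ignore in the loss (prompt), 1 = learn to produce this (response).
    loss_mask = [0] * len(prompt_tokens) + [1] * len(response_tokens)
    return tokens, loss_mask


tokens, loss_mask = build_example(
    "Summarize this article",
    "Here is a short summary",
)
for token, flag in zip(tokens, loss_mask):
    print(f"{flag}  {token}")
```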

Stage 3: RLHF — Learning From Human Preferences

The final major training stage for conversational AI is Reinforcement Learning from Human Feedback (RLHF).

Here's how it works:

- The model generates multiple different responses to the same prompt.

- Human evaluators rank those responses: which is more helpful? More accurate? Safer?

- A reward model is trained on these human rankings — it learns to predict which responses humans prefer.

- The main model is then trained to produce responses that get high scores from the reward model.
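The reward-model step can be sketched with a pairwise preference loss: the loss is low when the preferred response scores higher than the rejected one. This is one common formulation, not necessarily what any particular lab uses. The two-number "features" for each response and the linear reward model are invented for illustration; a real reward model is itself a large neural network.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def reward(weights, features):
    # Toy linear reward model: a weighted sum of response features.
    return sum(w * f for w, f in zip(weights, features))


def preference_loss(weights, chosen, rejected):
    # Low when the chosen response scores above the rejected one.
    margin = reward(weights, chosen) - reward(weights, rejected)
    return -math.log(sigmoid(margin))


# Each pair: (features of the response humans preferred, features of the other).
comparisons = [
    ([1.0, 0.2], [0.0, 0.9]),
    ([1.0, 0.8], [0.0, 0.1]),
    ([1.0, 0.5], [0.0, 0.5]),
]

weights = [0.0, 0.0]
learning_rate = 0.5

for epoch in range(100):
    for chosen, rejected in comparisons:
        margin = reward(weights, chosen) - reward(weights, rejected)
        d_margin = sigmoid(margin) - 1.0  # derivative of the loss w.r.t. margin
        for i in range(len(weights)):
            weights[i] -= learning_rate * d_margin * (chosen[i] - rejected[i])

for chosen, rejected in comparisons:
    print(reward(weights, chosen) > reward(weights, rejected))
```

After training, the toy reward model ranks the human-preferred response higher in every pair. In full RLHF, a score like this is what the main model is then optimized against.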

RLHF is a big part of why modern AI assistants feel like they're trying to be genuinely helpful rather than just generating plausible text. The models have been shaped by large numbers of human preference judgments.

What Gets Baked Into Models During Training

Training shapes everything about a model's behavior:

Knowledge — everything the model "knows" was in its training data. If something wasn't in the data, the model doesn't know it. If the training data contained errors, the model may have learned those errors.

Biases — training data reflects human-generated text, which reflects human biases, prejudices, and blind spots. These can appear in model outputs.

Capabilities — the model is better at tasks that appeared frequently in training data. Coding, essay writing, and summarization are common. Very niche tasks may be handled poorly.

Cutoff — the model's knowledge stops at its training data cutoff date. It doesn't know about events that happened after training ended.

Values — through fine-tuning and RLHF, models are shaped toward being helpful, harmless, and honest — though this process is imperfect and ongoing.

The Scale That Makes It Work

It's worth stepping back to appreciate the scale involved.

Training data: trillions of words — more text than any human could read in thousands of lifetimes.

Parameters: hundreds of billions of numbers — each one adjusted many times during training.

Training steps: hundreds of thousands to millions of gradient updates, each computed over a large batch of text and each refining the model slightly.

Compute: millions of GPU hours, spread across thousands of chips running in parallel for weeks or months.

This extreme scale is not accidental. Researchers found that model capability scales predictably with data and compute — bigger models trained on more data tend to be substantially more capable.

After Training: Inference

Once a model is trained, actually using it is called inference.

Inference is much cheaper per request than training. Instead of adjusting billions of parameters, you're just running the model forward: feeding in a prompt and generating a response, with the weights left unchanged.

This is why services like ChatGPT can serve millions of users simultaneously while training a new model from scratch would cost hundreds of millions of dollars.
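To make "running the model forward" concrete, here is a toy generation loop. The lookup table stands in for a frozen, already-trained model and is entirely made up. A real model computes a probability for every token at each step rather than looking up a single answer.

```python
# Hypothetical frozen "model": the most likely next token after each token.
NEXT_TOKEN = {
    "the": "eiffel",
    "eiffel": "tower",
    "tower": "is",
    "is": "in",
    "in": "paris",
    "paris": "<end>",
}


def generate(prompt_tokens, max_new_tokens=10):
    """Extend the prompt one token at a time. Nothing in the model changes."""
    tokens = list(prompt_tokens)
    for _ in range(max_new_tokens):
        next_token = NEXT_TOKEN.get(tokens[-1], "<end>")
        if next_token == "<end>":
            break
        tokens.append(next_token)
    return tokens


print(" ".join(generate(["the", "eiffel"])))  # prints: the eiffel tower is in paris
```

There is no loss, no gradient, and no weight update here, only repeated prediction, which is why a single response costs so much less than training.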

Why This Matters for You

Understanding training helps you understand why AI tools behave the way they do:

- Why models have knowledge cutoffs — training data ends at some point

- Why they sometimes make things up — the model predicts likely text, not verified facts

- Why they struggle with very recent events — not in the training data

- Why they sometimes show biases — inherited from training data

- Why bigger models tend to be better — more parameters + more data = more learned knowledge

This understanding makes you a more effective user of AI tools.

Final Thoughts

AI training is the process of showing a model enormous amounts of data, having it make predictions, measuring how wrong those predictions are, and adjusting it slightly, then repeating this across trillions of examples until something genuinely useful emerges.

The result is not a programmed system with hand-written rules. It's a learned system whose capabilities emerged from exposure to human knowledge at scale.

Keep learning

- What Is a Neural Network? (No Math Required) — the underlying structure being trained

- What Is an LLM? How Language Models Actually Work — how language models specifically are built and trained

- AI Hallucinations: Why AI Makes Things Up — a direct consequence of how training works

- What Is Machine Learning? A Plain-English Guide — the broader concept training is part of
