2026-03-01

What Is a Neural Network? (No Math Required)

Introduction

When you hear about "deep learning" or a "trained AI model," you're usually hearing about neural networks.

They power image recognition, language translation, voice assistants, self-driving cars, and the large language models behind chatbots like ChatGPT.

Neural networks sound intimidating. The name conjures images of brain anatomy and complex mathematics.

But the core idea is actually quite elegant — and you don't need any math to understand it.

The Brain Analogy (and Its Limits)

Neural networks were loosely inspired by how biological brains work.

Your brain contains roughly 86 billion neurons — nerve cells that communicate with each other through electrical signals. When neurons fire together in patterns, they produce thought, memory, and perception.

AI researchers in the 1940s and 50s wondered: could we build something similar in software?

The result was the artificial neural network — a mathematical system designed to process information in layers, inspired by (but not identical to) biological neural processing.

Important caveat: modern neural networks are not brains. They don't think, feel, or understand. They're mathematical functions that transform inputs into outputs — just organized in a brain-inspired structure.

The Basic Building Block: The Artificial Neuron

A neural network is built from many simple units called artificial neurons (sometimes called nodes or units; the perceptron, from the 1950s, was an early version).

Each neuron does three things:

- Receives inputs — numbers coming in from elsewhere

- Applies weights — multiplies each input by a number representing its importance

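- Produces an output — adds up the weighted inputs, passes the total through a simple function (called an activation function), and sends the result to the next layer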

Think of a single neuron like a tiny decision-maker:

I'm receiving signals from 10 sources. Some of them matter a lot (high weight), some barely at all (low weight). I'll add everything up and pass along my conclusion.
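To make those steps concrete, here is a minimal Python sketch of one neuron. Two details are assumptions not covered above: a bias (a constant added to the total, which most neurons have) and the sigmoid function as the activation, one of several common choices, which squashes the total into a value between 0 and 1. The names `neuron` and `sigmoid` are illustrative only.

```python
import math


def sigmoid(z: float) -> float:
    """Squash any number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))


def neuron(inputs: list[float], weights: list[float], bias: float) -> float:
    """One artificial neuron: weight each input, add everything up, then activate."""
    # Multiply each input by its weight (its "importance") and sum the results.
    total = sum(x * w for x, w in zip(inputs, weights)) + bias
    # The activation function turns the total into the neuron's output.
    return sigmoid(total)


# Hypothetical numbers: the first input matters a lot, the second barely at all,
# and the third pushes the output down (a negative weight).
inputs = [1.0, 0.5, 0.2]
weights = [2.0, 0.1, -0.5]
bias = -1.0
print(neuron(inputs, weights, bias))  # → about 0.72
```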

One neuron on its own can only make very simple decisions. But thousands or millions of them, organized into layers, become extremely powerful.

Layers: How Networks Are Organized

Neurons in a neural network are organized into layers:

Input layer

The first layer receives the raw data.

For an image recognition system, each neuron in the input layer might hold the value of a single pixel, such as its brightness or the intensity of one color channel.

Hidden layers

Between the input and output are one or more hidden layers.

These layers transform the raw input into increasingly abstract representations.

- Early hidden layers might detect simple patterns: edges, corners, colors.

- Deeper layers combine those patterns into more complex features: shapes, textures, objects.

The word "deep" in deep learning simply refers to having many hidden layers.

Output layer

The final layer produces the result.

For a dog-vs-cat classifier: two output neurons, one for each category. The one with the highest value wins.
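Stacking neurons into layers can be sketched the same way. The toy network below is an illustration, not a real classifier: it takes three made-up pixel values, passes them through one hidden layer of two neurons, and ends with two output neurons, one for "dog" and one for "cat". The weights are arbitrary hand-picked numbers (and the sigmoid activation is an assumed choice), so the answer it gives means nothing yet; training is what would make those numbers useful.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def layer(inputs, weights, biases):
    """A layer is a group of neurons; each row of `weights` belongs to one neuron."""
    return [
        sigmoid(sum(x * w for x, w in zip(inputs, row)) + b)
        for row, b in zip(weights, biases)
    ]


# Hypothetical, hand-picked weights: 3 inputs -> 2 hidden neurons -> 2 outputs.
HIDDEN_WEIGHTS = [[0.5, -0.2, 0.1], [-0.3, 0.8, 0.4]]
HIDDEN_BIASES = [0.0, 0.1]
OUTPUT_WEIGHTS = [[1.2, -0.7], [-0.9, 1.1]]
OUTPUT_BIASES = [0.0, 0.0]
LABELS = ["dog", "cat"]


def predict(pixels):
    hidden = layer(pixels, HIDDEN_WEIGHTS, HIDDEN_BIASES)  # hidden layer
    scores = layer(hidden, OUTPUT_WEIGHTS, OUTPUT_BIASES)  # output layer: one score per label
    best = max(range(len(scores)), key=lambda i: scores[i])  # the highest value wins
    return LABELS[best], scores


print(predict([0.9, 0.1, 0.3]))  # three made-up pixel brightness values
```

Each layer's outputs simply become the next layer's inputs; a deeper network would add more calls to `layer` between the input and the output.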

How a Neural Network Learns

The real magic of neural networks is how they learn.

When a neural network is first created, its weights are usually set to small random values, so its first outputs are essentially random guesses.

Then comes training:

Step 1: Make a prediction

Feed in a training example (an image, a sentence, a data point) and let the network produce an output.

Step 2: Measure the error

Compare the network's output to the correct answer. How far off was it?

This difference is called the loss (or error).

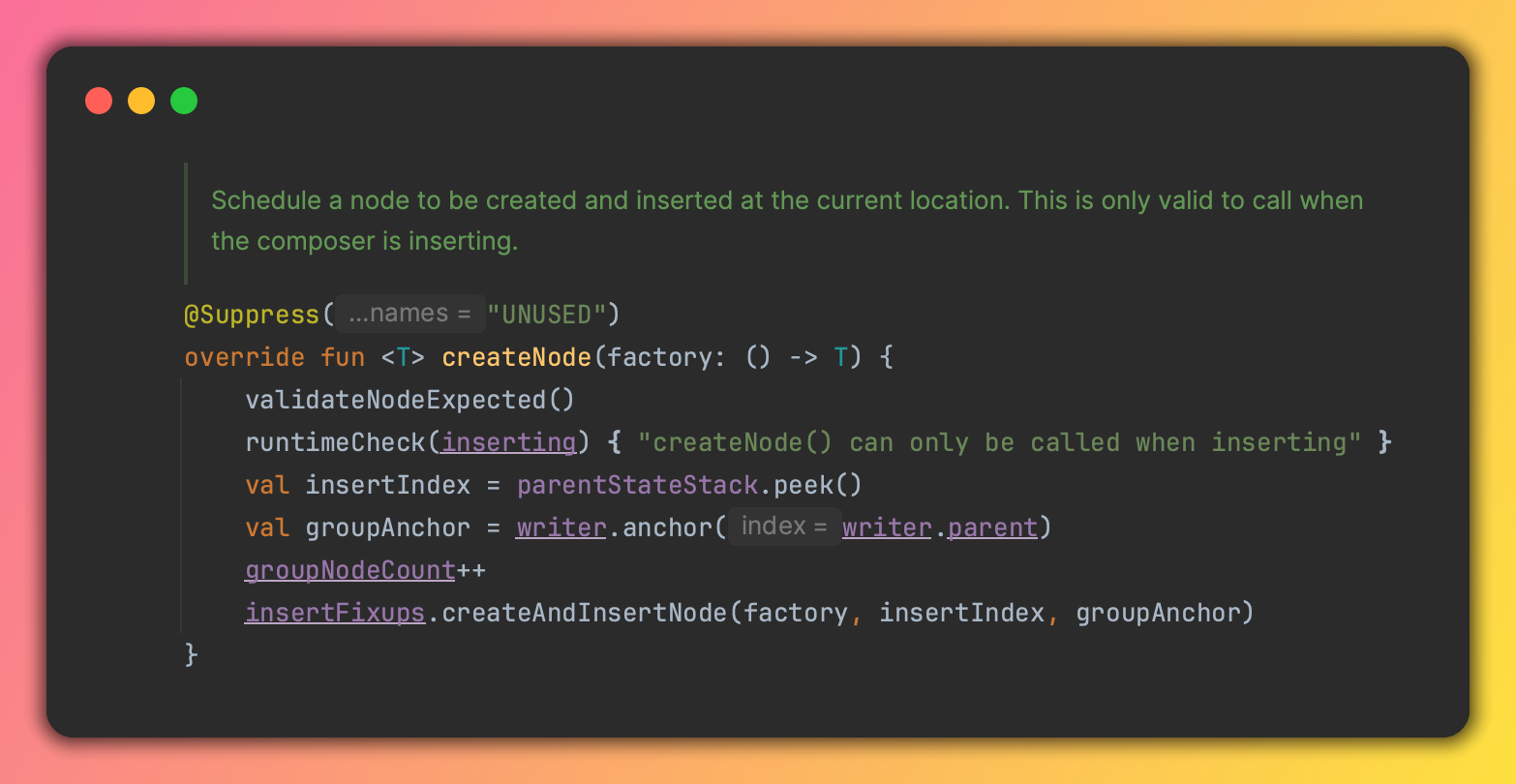
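One common way to turn "how far off" into a single number is mean squared error: the average of the squared differences between the network's outputs and the correct answers. This choice is an assumption for illustration; classifiers like the dog-vs-cat example more often use a loss called cross-entropy, but the idea is the same: a smaller number means a better prediction.

```python
def mean_squared_error(predictions, targets):
    """Average of the squared differences; 0 means a perfect match."""
    errors = [(p - t) ** 2 for p, t in zip(predictions, targets)]
    return sum(errors) / len(errors)


# Hypothetical example: the network scored an image [0.8, 0.3] for [dog, cat],
# and the correct answer is "dog", written as [1.0, 0.0].
print(mean_squared_error([0.8, 0.3], [1.0, 0.0]))    # → about 0.065
# A more confident, correct guess gets a smaller loss.
print(mean_squared_error([0.95, 0.05], [1.0, 0.0]))  # → about 0.0025
```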

Step 3: Backpropagate

Using an algorithm called backpropagation, the network figures out which weights contributed most to the error — and in which direction.
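For a single neuron, backpropagation boils down to the chain rule from calculus: work out how the error changes with the neuron's output, how the output changes with its weighted total, and how the total changes with each weight, then multiply those together. The sketch below assumes a sigmoid neuron and squared error (illustrative choices, with made-up numbers); in a real network the same calculation is repeated layer by layer, from the output back toward the input, which is where the name comes from.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def loss(weights, bias, inputs, target):
    """Squared error of a single sigmoid neuron on one example."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return (sigmoid(z) - target) ** 2


def gradients(weights, bias, inputs, target):
    """Chain rule: how much the loss changes per small change in each weight."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    out = sigmoid(z)
    d_loss_d_out = 2 * (out - target)  # loss = (out - target)^2
    d_out_d_z = out * (1 - out)        # slope of the sigmoid at z
    d_loss_d_z = d_loss_d_out * d_out_d_z
    # z = sum(x_i * w_i) + bias, so dz/dw_i = x_i and dz/dbias = 1
    weight_grads = [d_loss_d_z * x for x in inputs]
    bias_grad = d_loss_d_z
    return weight_grads, bias_grad


weights, bias = [0.4, -0.6], 0.1
inputs, target = [1.0, 2.0], 1.0
w_grads, b_grad = gradients(weights, bias, inputs, target)
# A negative gradient means "increase this weight to reduce the error";
# a larger magnitude means the weight contributed more.
print(w_grads, b_grad)
```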

Step 4: Adjust the weights

Each weight is nudged slightly in the direction that would reduce the error.

This is done with an algorithm called gradient descent — essentially, always moving slightly downhill toward lower error.
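Gradient descent can be shown on a made-up error curve with a single weight. The curve `error(w) = (w - 3)^2` is a hypothetical stand-in whose lowest point is at `w = 3`; each step moves the weight a little in the direction opposite to the slope, which is "downhill". The learning rate of 0.1 is an arbitrary choice: too large and the steps overshoot, too small and progress crawls.

```python
def error(w):
    """A made-up error curve whose lowest point is at w = 3."""
    return (w - 3.0) ** 2


def slope(w):
    """Derivative of error(w): positive means uphill to the right."""
    return 2.0 * (w - 3.0)


w = 0.0               # start from an arbitrary guess
learning_rate = 0.1   # how big each nudge is (a hypothetical choice)
for _ in range(50):
    w -= learning_rate * slope(w)  # step against the slope, i.e. downhill

print(round(w, 3), round(error(w), 6))  # → 3.0 0.0
```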

Repeat millions of times

Do this for millions of training examples, adjusting the weights a tiny bit each time.

After enough iterations, the weights settle into values that allow the network to make accurate predictions on new, unseen inputs.
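Putting the four steps together gives a complete, if tiny, training loop. This sketch trains a single sigmoid neuron, starting from random weights, to reproduce logical OR on four hypothetical examples. The dataset, learning rate, and number of passes are illustrative assumptions; real networks run the same kind of loop over millions of examples and weights, usually with a library such as PyTorch or JAX computing the gradients automatically.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def predict(inputs, weights, bias):
    """A single sigmoid neuron's output for one example."""
    z = sum(x * w for x, w in zip(inputs, weights)) + bias
    return sigmoid(z)


# Hypothetical toy dataset: the answer is 1 if either input is 1 (logical OR).
data = [([0.0, 0.0], 0.0), ([0.0, 1.0], 1.0), ([1.0, 0.0], 1.0), ([1.0, 1.0], 1.0)]

random.seed(0)  # fixed seed so the run is repeatable
weights = [random.uniform(-1.0, 1.0) for _ in range(2)]  # start from random weights
bias = 0.0
learning_rate = 0.5  # an arbitrary choice for this toy problem

for epoch in range(2000):  # repeat the whole dataset many times
    epoch_loss = 0.0
    for inputs, target in data:
        out = predict(inputs, weights, bias)        # Step 1: make a prediction
        epoch_loss += (out - target) ** 2           # Step 2: measure the error
        d_z = 2 * (out - target) * out * (1 - out)  # Step 3: chain rule, as in backprop
        # Step 4: nudge each weight (and the bias) slightly downhill
        weights = [w - learning_rate * d_z * x for w, x in zip(weights, inputs)]
        bias -= learning_rate * d_z

print(f"loss on the last pass: {epoch_loss:.4f}")
for inputs, target in data:
    print(inputs, "target", target, "prediction", round(predict(inputs, weights, bias), 2))
```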

An Analogy: Learning to Play Darts

Imagine learning to throw darts at a bullseye.

- Your first throws are random — you haven't calibrated yet.

- You see where each dart lands (the error).

- You adjust your grip, stance, and throwing angle.

- Repeat until you're consistently hitting the bullseye.

Training a neural network works similarly. The "grip and stance" are the weights. The distance from the bullseye is the loss. Working out which adjustments to make is backpropagation; actually making them is gradient descent.

Why "Deep" Learning?

Deep learning refers to neural networks with many hidden layers.

The depth matters because each layer learns a more abstract representation of the data.

Example — image recognition:

| Layer | What it learns |
|---|---|
| Layer 1 | Edges and color gradients |
| Layer 2 | Simple shapes (corners, curves) |
| Layer 3 | Object parts (eyes, wheels, handles) |
| Layer 4 | Full objects (faces, cars, cups) |

By stacking layers, deep networks can learn to understand complex, high-level concepts — not just raw pixels.

This is why deep learning dramatically outperformed earlier approaches on tasks like image recognition and speech processing.

What Neural Networks Are Good At

Neural networks shine at tasks involving:

- Pattern recognition — images, speech, handwriting

- Natural language — translation, summarization, generation

- Prediction — weather, demand forecasting, customer behavior

- Generation — art, music, text, code

They struggle with:

- Tasks requiring explicit reasoning or logic

- Problems with very little training data

- Situations where you need to explain exactly why a decision was made

Types of Neural Networks

Different architectures have been developed for different tasks:

Convolutional Neural Networks (CNNs) — designed for images. They apply filters that scan across the image to detect patterns regardless of where they appear.
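The scanning idea can be sketched with a single row of pixels, a one-dimensional simplification of a real image. A small filter of weights slides along the row and gives a strong response wherever its pattern appears. The edge filter `[-1, 1]` is a hand-picked example; in a CNN the filter weights are learned. Strictly speaking this operation is cross-correlation, which is what deep learning libraries usually compute under the name "convolution".

```python
def convolve_1d(pixels, kernel):
    """Slide the kernel along the row; each output is a weighted sum of one window."""
    k = len(kernel)
    return [
        sum(p * w for p, w in zip(pixels[i:i + k], kernel))
        for i in range(len(pixels) - k + 1)
    ]


# Hypothetical edge filter: fires where brightness jumps from dark to light.
edge_filter = [-1, 1]

row_a = [0, 0, 9, 9, 9, 9]  # the edge is near the left
row_b = [0, 0, 0, 0, 9, 9]  # the same edge, further to the right

print(convolve_1d(row_a, edge_filter))  # → [0, 9, 0, 0, 0]
print(convolve_1d(row_b, edge_filter))  # → [0, 0, 0, 9, 0]
```

The same filter finds the edge in both rows, just at a different position, which is what detecting a pattern "regardless of where it appears" looks like in practice.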

Recurrent Neural Networks (RNNs) — designed for sequences. They have a form of "memory" that helps process text, speech, and time series data.
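An RNN's "memory" is a hidden value that is updated as each item in the sequence arrives. The sketch below uses single numbers instead of the vectors and matrices a real RNN would use, and its weights are hypothetical. It shows that the same items in a different order leave a different final memory, which is what lets an RNN care about order.

```python
import math


def rnn_step(memory, x, w_memory, w_input, bias):
    """Combine the previous memory with the new input, then squash with tanh."""
    return math.tanh(w_memory * memory + w_input * x + bias)


# Hypothetical single-number weights; real RNNs use vectors and learned matrices.
W_MEMORY, W_INPUT, BIAS = 0.5, 1.0, 0.0


def read_sequence(sequence):
    memory = 0.0  # the memory starts out empty
    for x in sequence:  # read the items one at a time, in order
        memory = rnn_step(memory, x, W_MEMORY, W_INPUT, BIAS)
    return memory  # the final memory summarizes everything that was read


print(read_sequence([1.0, 0.0, 0.0]))  # the 1.0 came first and has partly faded
print(read_sequence([0.0, 0.0, 1.0]))  # the 1.0 came last and is still strong
```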

Transformers — the architecture behind modern language models like ChatGPT. They process entire sequences at once and learn which parts of a sequence to "pay attention to." This is the dominant architecture in AI today.
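The "pay attention to" step is usually implemented as scaled dot-product attention: compare a query vector with a key vector for every position, turn the scores into weights that sum to 1 using softmax, and take a weighted average of value vectors. In a real Transformer the queries, keys, and values are produced by learned weight matrices and there are many attention heads; in this sketch they are small hand-picked vectors for a hypothetical three-token sequence.

```python
import math


def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    largest = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - largest) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def attention(query, keys, values):
    """Scaled dot-product attention for a single query over a sequence."""
    d = len(query)
    # Relevance of each position: dot product of the query with that position's key.
    scores = [sum(q * k for q, k in zip(query, key)) / math.sqrt(d) for key in keys]
    weights = softmax(scores)  # how much attention each position receives
    # Output: weighted average of the value vectors, one dimension at a time.
    output = [
        sum(w * value[i] for w, value in zip(weights, values))
        for i in range(len(values[0]))
    ]
    return output, weights


# Hypothetical 2-dimensional vectors for a three-token sequence.
keys = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]
values = [[10.0, 0.0], [0.0, 10.0], [5.0, 5.0]]
query = [2.0, 0.0]  # most similar to the first token's key

output, weights = attention(query, keys, values)
print([round(w, 2) for w in weights])  # most of the weight goes to the first token
print([round(o, 2) for o in output])
```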

Final Thoughts

Neural networks are not magic, even if their individual decisions can be hard to explain.

They're mathematical systems made of layers of simple units, trained by showing them millions of examples and repeatedly nudging their weights toward better answers.

This simple idea — combined with vast amounts of data and powerful computers — is responsible for nearly every major AI breakthrough of the past decade.

Keep learning

- What Is Machine Learning? A Plain-English Guide — the broader concept neural networks belong to

- What Is an LLM? How Language Models Actually Work — how the Transformer architecture is used to build language models

- AI vs. Machine Learning vs. Deep Learning: What's the Difference? — placing neural networks and deep learning in context

- How AI Models Are Trained: Data, Compute, and Feedback — a closer look at the training process
