2026-03-07

AI Hallucinations: Why AI Makes Things Up (And What to Do About It)

Introduction

You ask ChatGPT for a list of academic papers on a topic. It provides five — complete with authors, journals, and publication years. You go to look them up.

They don't exist.

Or you ask an AI assistant about a historical event. It gives you a confident, well-structured answer — with a wrong date, a fictional quote, and an event that never happened.

This is called AI hallucination — and it's one of the most important things to understand about modern AI.

What Is an AI Hallucination?

An AI hallucination is when an AI system generates output that is factually incorrect, fabricated, or nonsensical — and presents it with the same confident tone it uses when it's correct.

The word "hallucination" is borrowed from psychology, where it refers to perceiving things that aren't there. In AI, it means generating things that aren't true.

Hallucinations come in several forms, from mild to severe:

- Minor errors — slightly wrong dates, misattributed quotes

- Fabricated details — inventing specific statistics that sound plausible

- Completely made-up entities — citing books, papers, companies, or people that don't exist

- Factual contradictions — saying two incompatible things confidently

The unsettling part is that hallucinations are presented with no visual indicator that they're wrong. The AI doesn't say "I'm not sure about this" — it just states the falsehood as confidently as it states verified facts.

Why Does This Happen?

Understanding why hallucinations occur requires understanding how AI language models work.

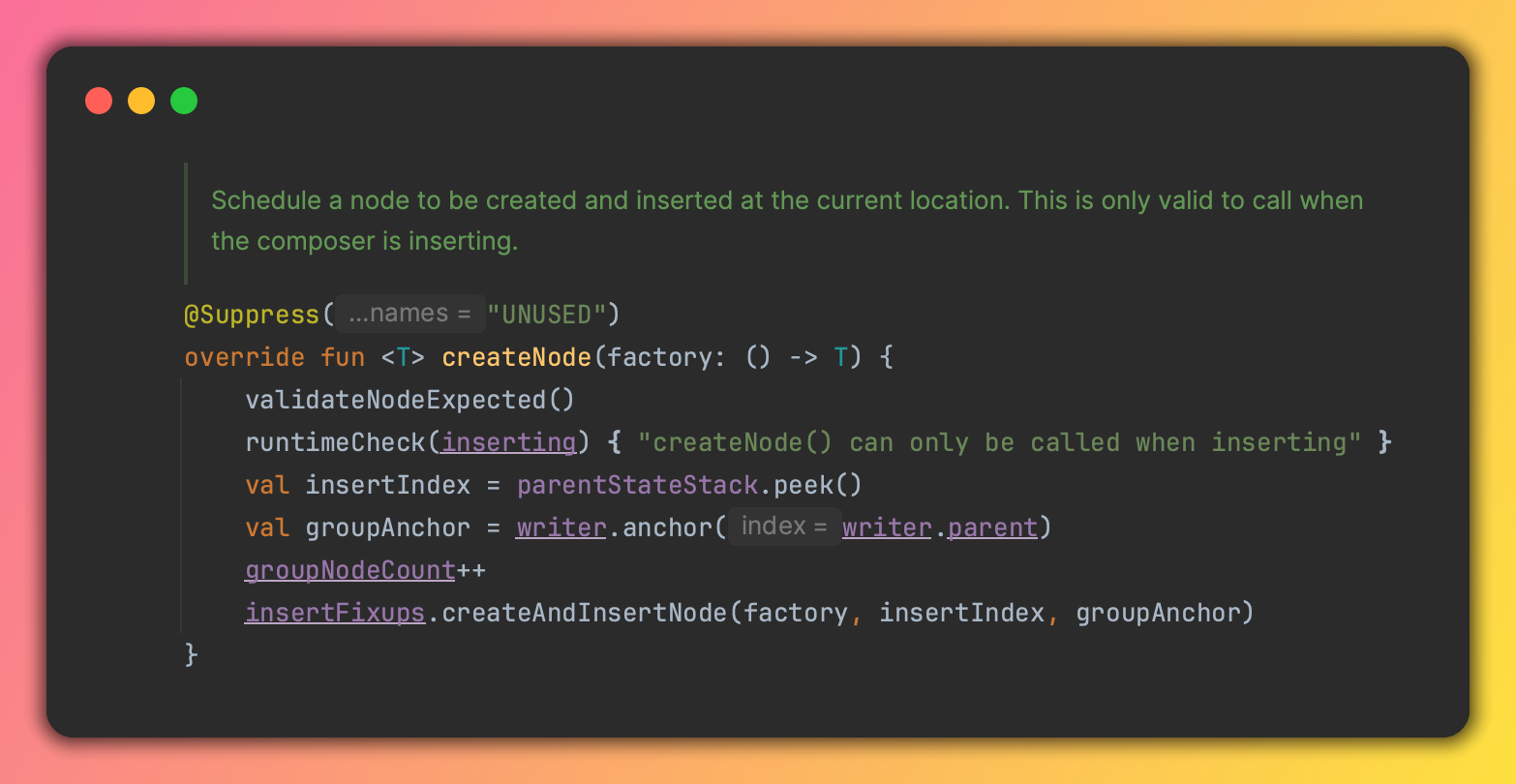

LLMs predict, they don't look things up

By default, language models like ChatGPT don't consult a verified database of facts. They don't look up your question in an encyclopedia.

Instead, they predict what text should come next based on patterns learned from training data.

When you ask for a list of research papers, the model generates text that looks like a list of research papers — complete with plausible-sounding titles, authors, and dates. Whether those papers actually exist is a separate question the model isn't directly answering.
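To make this concrete, here is a minimal sketch using a toy word-level model. It is nowhere near a real LLM (those use neural networks over tokens, not word-pair counts), and the three "papers" in `CORPUS` are invented purely for illustration. The point is only that sampling the next word from learned patterns can stitch together a reference that looks right, and nothing in the loop checks whether it exists.

```python
import random
from collections import defaultdict

# Tiny made-up "training corpus" of citation-like strings (all fictional).
CORPUS = [
    "Smith J. (2019). Neural networks for text. Journal of AI Research.",
    "Lee K. (2021). Language models for search. Journal of Data Science.",
    "Patel R. (2020). Neural methods for search. AI Review.",
]

def train_bigrams(lines):
    """Map each word to the list of words that followed it in the corpus."""
    followers = defaultdict(list)
    for line in lines:
        words = ["<s>"] + line.split() + ["</s>"]
        for prev, nxt in zip(words, words[1:]):
            followers[prev].append(nxt)
    return followers

def generate(followers, rng, max_words=30):
    """Sample one word at a time. Nothing here checks whether the result is real."""
    word, out = "<s>", []
    for _ in range(max_words):
        word = rng.choice(followers[word])
        if word == "</s>":
            break
        out.append(word)
    return " ".join(out)

followers = train_bigrams(CORPUS)
rng = random.Random(0)  # fixed seed so repeated runs give the same samples
samples = [generate(followers, rng) for _ in range(5)]
for s in samples:
    # A sample can be a fluent mix of several entries and still not be in the corpus.
    print(s, "| in corpus:", s in CORPUS)
```

Every sample is built only from words the model has seen, in an order it has seen word by word, which is exactly why a recombined citation reads as plausibly as a real one.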

The model was trained to sound fluent, not to be accurate

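During training, language models are first rewarded for predicting text that resembles their training data, then fine-tuned toward responses that people rate as coherent, relevant, and well-structured. Neither step directly checks facts. Fluency and correctness are related, but they are not the same thing.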

A model can produce extremely fluent, confident-sounding text that is completely wrong.

The model doesn't know what it doesn't know

One of the strangest aspects of hallucinations is that the model lacks strong uncertainty awareness.

Humans say "I'm not sure, but..." or "I think..." when they're uncertain. Language models don't have a built-in mechanism to detect the boundaries of their knowledge.

If a question is about something not well-represented in training data, the model doesn't think "I don't know this." It just generates the most plausible-sounding continuation of the conversation — which may be completely fabricated.
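A small sketch can show why "just generate the most plausible continuation" never produces a blank. The scores below are hypothetical numbers chosen for illustration, not output from any real model, and real systems score tens of thousands of tokens and usually sample rather than always taking the top one. Under those assumptions, the example turns scores into probabilities and picks the top candidate in two situations: one where the model has a clear favorite and one where it is nearly guessing.

```python
import math

def softmax(scores):
    """Turn raw scores into probabilities that sum to 1."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def entropy_bits(probs):
    """Shannon entropy: higher means the probability is spread more evenly."""
    return -sum(p * math.log2(p) for p in probs if p > 0)

candidates = ["1789", "1792", "1804", "1815"]

# Hypothetical scores for the next token after "The event took place in ..."
well_known = [9.0, 2.0, 1.0, 0.5]  # one option clearly dominates
obscure = [1.1, 1.0, 1.0, 0.9]     # close to a four-way coin toss

for name, scores in [("well_known", well_known), ("obscure", obscure)]:
    probs = softmax(scores)
    pick = candidates[probs.index(max(probs))]
    print(f"{name}: picks {pick} "
          f"(top p={max(probs):.2f}, entropy={entropy_bits(probs):.2f} bits)")
```

Both cases produce an equally definite-looking answer. The spread of the probabilities differs, but that spread is not part of the text the reader sees.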

Training data isn't always accurate

The internet contains misinformation, errors, and outdated information. Whatever was in the training data — including its inaccuracies — can show up in model outputs.

Common Types of Hallucinations

Fabricated citations

Asking for academic papers, books, legal cases, or news articles is a high-hallucination zone. Models generate convincing-looking references that don't exist.

Invented statistics

"Studies show that X% of people..." — the percentage might be plausible but invented.

Wrong dates and timelines

Historical events placed in the wrong year, people's birth dates slightly off, chronology scrambled.

False biographical details

Names and accomplishments that are real, but with details swapped between people, or with invented achievements added.

Confident answers to unanswerable questions

Asked about something genuinely unknown, a model will sometimes generate a confident answer rather than acknowledging uncertainty.

Why Models Don't Just Say "I Don't Know"

This is one of the most common questions people have.

The answer is that saying "I don't know" is itself a learned behavior — and it competes with the model's tendency to generate helpful, complete-sounding responses.

Modern models like ChatGPT have been fine-tuned to acknowledge uncertainty more often than their base models would. But this is imperfect. The model has no reliable way to tell when it knows something and when it's guessing. It has been trained to produce uncertainty signals in the kinds of situations that humans marked as uncertain during training, but it can miss cases.

This is an active area of research. Reducing hallucinations reliably is one of the hardest open problems in AI.

How Serious Is the Problem?

It depends heavily on the use case.

Lower risk: Drafting emails, brainstorming ideas, writing creative fiction, summarizing documents you already have. Errors are easy to catch and low-stakes.

Higher risk: Legal research, medical information, academic citations, financial decisions, historical facts, anything requiring precise accuracy.

Hallucinations are a genuine problem, not a minor quirk. There have been real-world cases of lawyers submitting AI-generated legal briefs that cite non-existent cases, and of doctors finding AI-generated medical summaries with invented drug interactions.

The problem is that hallucinations are hard to catch because they're confidently delivered and often plausible-sounding.

What You Can Do About It

1. Verify anything that matters

Treat AI output on factual matters the way you'd treat information from a well-read friend who occasionally misremembers things.

Useful starting point? Yes. Final word? No.

If you're going to cite it, publish it, or act on it — verify it from a primary source.

2. Ask about uncertainty

Prompt the model explicitly: "How confident are you about this? What parts might be inaccurate?"

This doesn't guarantee honesty, but it can surface uncertainty the model wouldn't volunteer.

3. Avoid high-risk hallucination prompts

Requests for specific citations, statistics, or highly detailed factual claims are where hallucinations most commonly occur.

If you need citations, find them yourself using Google Scholar or a library database, then ask the AI to help you analyze them.

4. Use retrieval-augmented systems when available

Many AI products now use retrieval-augmented generation (RAG) — the AI retrieves actual documents from a database before generating a response, grounding its answers in real sources.

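Tools like Perplexity.ai are designed this way. They provide citations you can check. This significantly reduces hallucinations for factual queries, though it doesn't eliminate them: a model can still misread or misquote a source it retrieved, so the citations are worth opening.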
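The retrieve-then-generate idea can be sketched in a few lines. This is a simplified illustration, not how any particular product works: the `DOCS` store, the `STOPWORDS` list, and the helper names are all hypothetical, the word-overlap scoring stands in for the search index or vector database a real system would use, and the final step of sending the prompt to a model is left out.

```python
import re

# Hypothetical mini document store standing in for a real search index.
DOCS = {
    "doc-1": "The Eiffel Tower was completed in 1889 for the World's Fair in Paris.",
    "doc-2": "The Statue of Liberty was dedicated in 1886 in New York Harbor.",
    "doc-3": "The Brooklyn Bridge opened in 1883 and spans the East River.",
}

# Common words ignored when matching (an illustrative list, not a standard one).
STOPWORDS = {"the", "was", "when", "in", "a", "of", "for", "and"}

def tokens(text):
    """Lowercase words and numbers, minus stopwords."""
    return set(re.findall(r"[a-z0-9]+", text.lower())) - STOPWORDS

def retrieve(question, docs, k=2):
    """Return up to k (id, text) pairs that share at least one word with the question."""
    q = tokens(question)
    ranked = sorted(docs.items(), key=lambda item: len(q & tokens(item[1])), reverse=True)
    return [(doc_id, text) for doc_id, text in ranked[:k] if q & tokens(text)]

def build_prompt(question, passages):
    """Put the retrieved text in front of the question and ask for cited answers."""
    sources = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return (
        "Answer using only the sources below and cite their ids. "
        "If the sources do not contain the answer, say so.\n\n"
        f"Sources:\n{sources}\n\nQuestion: {question}"
    )

question = "When was the Eiffel Tower completed?"
passages = retrieve(question, DOCS)
print(build_prompt(question, passages))
```

The design choice that matters is that the model is asked to read, not to recall. When `retrieve` comes back empty, a careful system would say it found nothing rather than fall back on the model's memory.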

5. Provide context rather than asking the AI to recall

Instead of "Tell me about the French Revolution," try pasting in a relevant article and asking: "Based on this text, summarize the causes of the French Revolution."

When the AI works from a provided document rather than its memory, hallucinations decrease substantially.

The Bigger Picture

Hallucinations reveal something fundamental about how current AI works:

These systems are language generators, not knowledge databases.

They excel at producing coherent, well-structured text that follows the patterns of human writing. They're remarkable at this. But producing accurate factual content is a different skill — and one that requires either grounding in real sources or more explicit uncertainty modeling.

The field is working on this. Better training techniques, retrieval augmentation, and uncertainty quantification are all active research areas. Models today generally hallucinate less than models from two years ago, though none are free of the problem.

But for now, healthy skepticism is the right attitude — combined with genuine appreciation for what these tools can do when used well.

Final Thoughts

AI hallucination is not a bug that will be patched in the next version. It's a fundamental consequence of how current language models work — generating plausible text based on patterns, without a verification mechanism for factual accuracy.

Understanding this doesn't mean avoiding AI tools. It means using them smartly: for drafting, brainstorming, explaining, and summarizing — with human verification for anything that needs to be accurate.

Keep learning

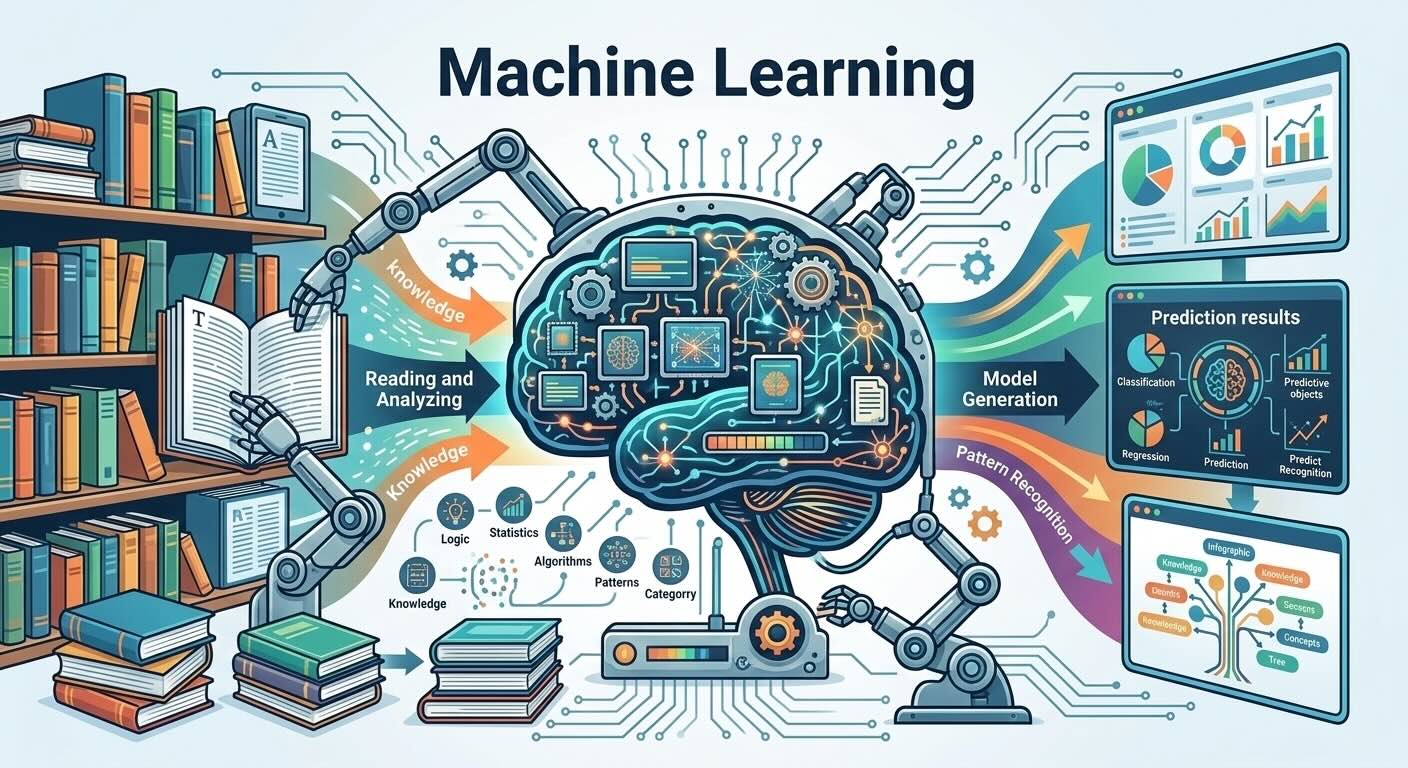

- What Is an LLM? How Language Models Actually Work — understand why hallucinations are structurally inevitable with current AI

- How AI Models Are Trained: Data, Compute, and Feedback — how training shapes (and limits) what models know

- Getting Started with ChatGPT — how to use AI tools effectively given these limitations

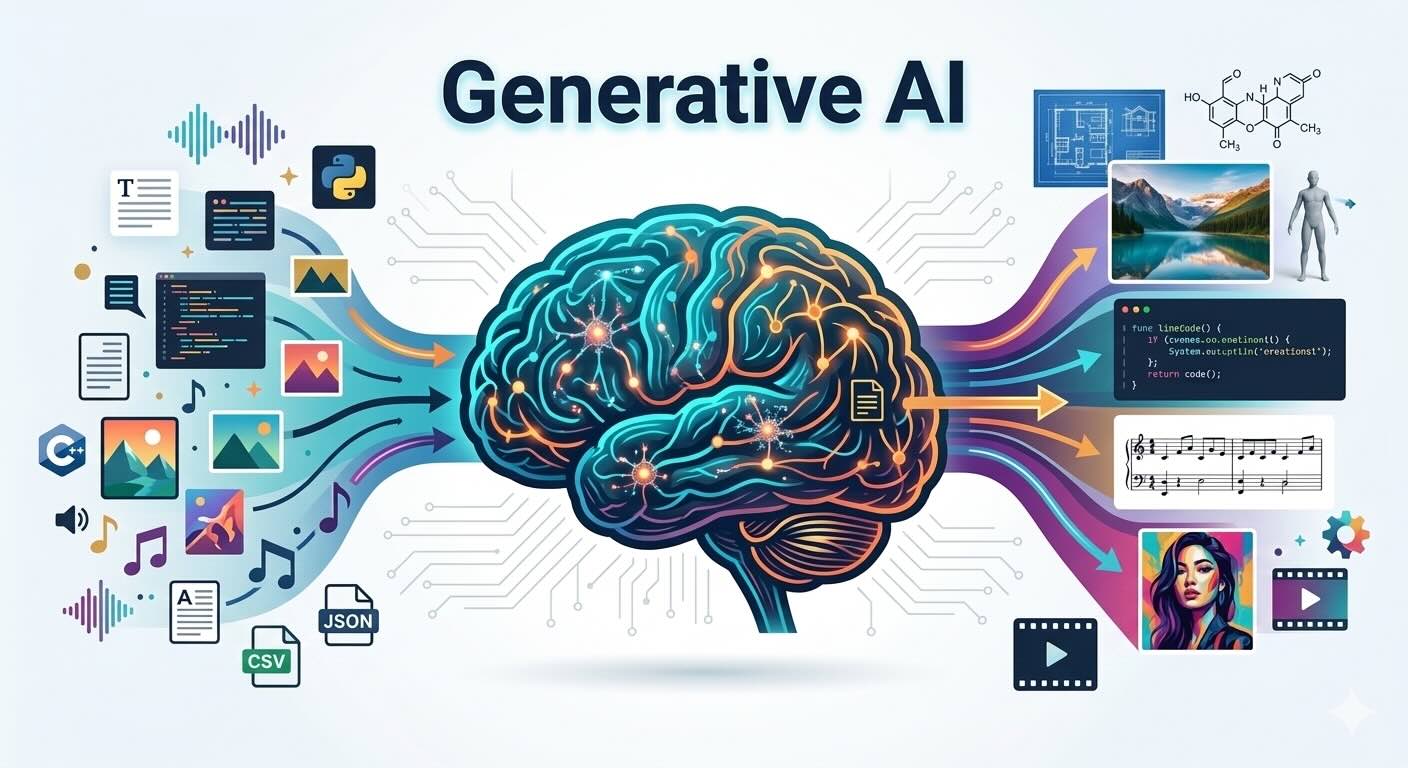

- What Is Generative AI? A Simple Explanation — the broader context for understanding AI's capabilities and limits
